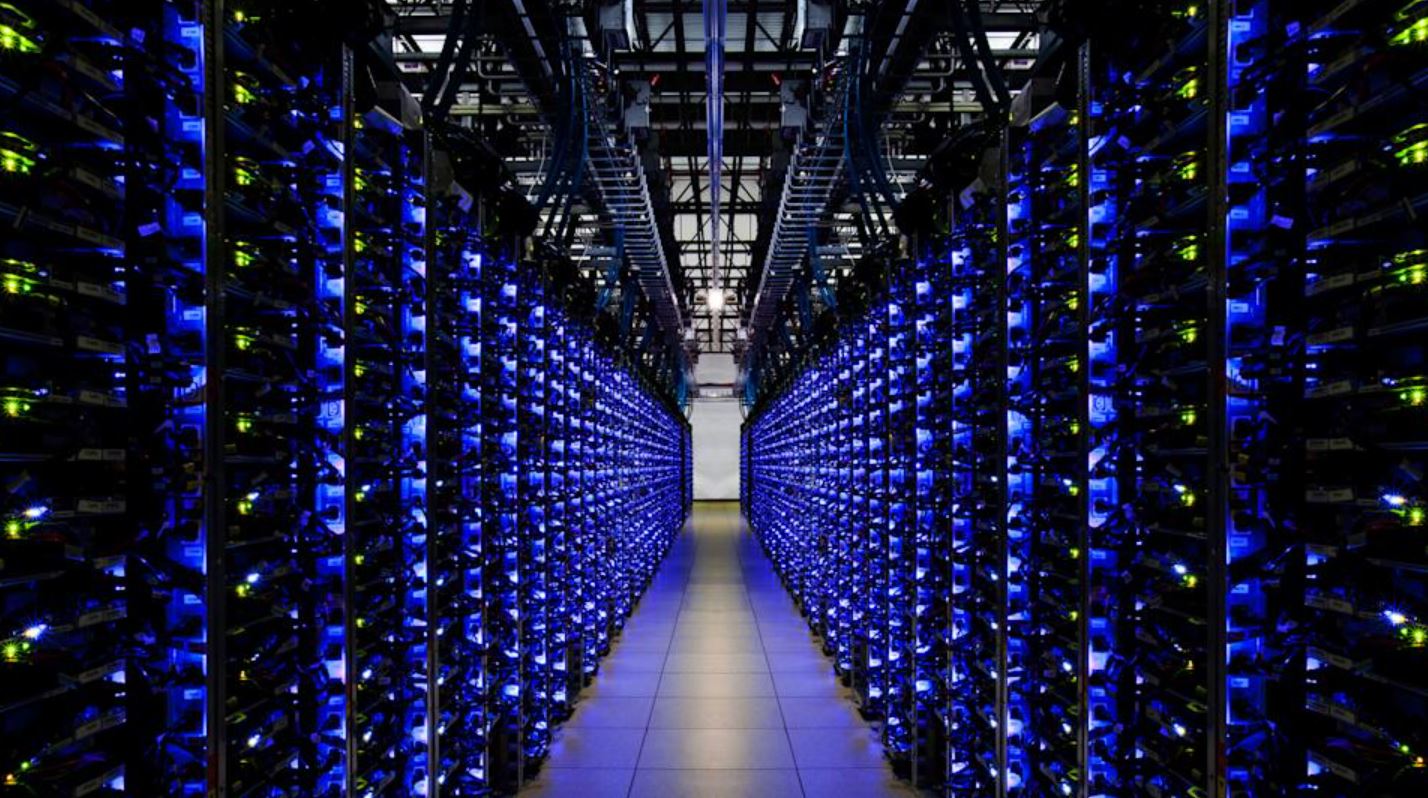

Once upon a time, China’s ambition to lead the AI world seemed unstoppable. The nation was buzzing with excitement, fueled by an influx of Nvidia GPUs despite U.S. export barriers. These Nvidia H100 chips were so in demand that they fetched up to 200,000 yuan ($28,000) on the black market. To support this AI surge, data centers popped up all over the country.

But here’s where the story takes a turn. A recent report from MIT Technology Review reveals that China’s AI boom is losing steam. The government funding that once drove this growth has dried up, leaving project leaders scrambling to sell off surplus GPUs and unused facilities. The rapid build-out of AI infrastructure, it turns out, was poorly planned, leaving a gap between what was built and what was actually needed.

Many data centers were built without a clear understanding of how AI training workloads differ from inference workloads, which led to inefficiencies. Alibaba chairman Joe Tsai has even warned that data center construction is showing signs of a bubble. Builders focused heavily on training capacity, which demands enormous computational power, rather than on inference, which typically needs far less computation per task. The result was a glut of high-end GPUs on the market.

Some companies used AI data center projects as a way to secure government-subsidized green energy or land deals. In some cases, electricity meant for AI workloads was sold back to the grid for profit, and developers collected loans and tax breaks while their facilities sat idle. Many investors, it seemed, were more interested in policy benefits than in genuine AI development.

Last year, 144 companies registered with the Cyberspace Administration of China to develop large language models (LLMs), but by the end of the year only about 10% were still actively investing in LLM training. Jimmy Goodrich, a senior advisor at the RAND Corporation, attributed the industry’s growing pains to inexperienced players, including corporations and local governments, that jumped on the hype without building facilities suited to actual demand.

The Chinese AI lab DeepSeek has added to these challenges. Its large language models have outperformed those of American AI giants on several benchmarks, defying U.S. efforts to curb China’s tech ambitions. DeepSeek’s R1 model beat models from OpenAI, Meta, and Anthropic on a number of tests, while DeepSeek reported that the final training run of its V3 model cost just $5.6 million, a fraction of the sums spent by U.S. labs. Achieved despite U.S. semiconductor restrictions, these results have prompted AI firms to rethink how much hardware and scale they really need, which further weakens the case for China’s massive GPU build-out.

Wall Street, however, still expects electricity demand to grow, even as DeepSeek shows that AI models can be built more efficiently. “Demand is definitely going to rise, but by how much, we don’t know,” said Nikki Hsu, a utilities analyst at Bloomberg Intelligence. DeepSeek’s efficiency might even spur broader AI adoption. According to Carlos Torres Diaz of Rystad Energy, greater efficiency could simply let data centers process more data.

The Electric Power Research Institute (EPRI) predicts that data centers will consume up to 9% of U.S. electricity by the end of the decade, up from the current 1.5%, driven by rapid adoption of power-intensive technologies such as generative AI. For context, the U.S. industrial sector consumed 1.02 million GWh last year, or 26% of the nation’s electricity use.

China’s AI journey is clearly a complex one, full of both ambition and setbacks. Whether the bubble will burst or evolve into something more sustainable remains to be seen.