Anthropic has rolled out a fascinating new tool called the “AI microscope.” It’s designed to give us a peek into the inner workings of their Claude 3.5 Haiku language model. This tech reveals how the model handles data and tackles tricky reasoning tasks. One of the standout findings? Claude uses something called a “universal language of thought,” which is a kind of internal framework that’s language-independent.

Let me give you an example. Say Claude is asked for the opposite of “small” in several different languages. Before it produces an answer in any one language, it first activates a shared, language-independent idea of smallness and its opposite. That shared concept works a bit like a bridge linking “small” and “big” across languages like English, Chinese, and French, and only at the end is the result turned into a word in the language of the question. It turns out that larger models like Claude 3.5 have more overlap in concepts across languages than their smaller peers, which might help them reason more consistently across languages.

The research also delved into how Claude handles questions that require multiple steps, like figuring out the capital of the state where Dallas is located. First, the model recalls that “Dallas is in Texas,” then it figures out “the capital of Texas is Austin.” This shows Claude’s ability to think through steps, not just remember facts.
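To make the difference between recalling one memorized answer and chaining two facts concrete, here is a minimal Python sketch of the two-step lookup described above. It is only an analogy: the dictionaries and the function name `capital_of_state_containing` are hypothetical, and nothing here is meant to describe how Claude actually stores or retrieves facts.

```python
from typing import Tuple

# Toy lookup tables standing in for stored facts (illustrative only).
CITY_TO_STATE = {"Dallas": "Texas", "Miami": "Florida"}
STATE_TO_CAPITAL = {"Texas": "Austin", "Florida": "Tallahassee"}


def capital_of_state_containing(city: str) -> Tuple[str, str]:
    """Answer a two-hop question and expose the intermediate fact."""
    state = CITY_TO_STATE[city]        # hop 1: "Dallas is in Texas"
    capital = STATE_TO_CAPITAL[state]  # hop 2: "the capital of Texas is Austin"
    return state, capital


if __name__ == "__main__":
    state, capital = capital_of_state_containing("Dallas")
    print(f"Dallas is in {state}; the capital of {state} is {capital}.")
```

The point of the sketch is that there is no direct "Dallas to Austin" entry anywhere. The answer only appears by passing through the intermediate fact, which is the behavior the research reports.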

Claude also shows some impressive planning skills when it comes to poetry. It picks out candidate rhyming words first, then writes each line so that it lands on one of them. If you change the target words, you get a completely different poem, which points to a strategic approach rather than just predicting the next word in line.

When it comes to math, Claude uses two paths in parallel: one for rough guesses and another for precise calculations. But here’s an interesting twist: when Claude explains its reasoning, the explanation often sounds like human step-by-step logic, even if that’s not exactly what’s happening inside. And if you give it wrong hints, it can come up with explanations that sound plausible but aren’t logically sound.
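As a rough illustration of how a coarse estimate and a precise calculation can combine into an exact answer, here is a toy Python sketch. It is an assumption-heavy analogy, not a description of Claude's internals: the function names are hypothetical, the precise path is assumed to track only the last digit, and the code handles non-negative integers only.

```python
def rough_path(a: int, b: int) -> int:
    """Coarse estimate: ignore the ones digit of a.

    The true sum is guaranteed to lie in the window [lo, lo + 9].
    """
    return (a // 10) * 10 + b


def last_digit_path(a: int, b: int) -> int:
    """Precise but narrow: compute only the final digit of the sum."""
    return (a % 10 + b % 10) % 10


def two_path_add(a: int, b: int) -> int:
    """Combine both paths: pick the one number in the rough window
    whose last digit matches the precise path."""
    lo = rough_path(a, b)
    digit = last_digit_path(a, b)
    return lo + (digit - lo) % 10


if __name__ == "__main__":
    print(two_path_add(36, 59))  # 95
```

Neither path knows the answer on its own. The rough path narrows the sum to a window of ten values, and the last-digit path picks the single value in that window, which is quite different from the carry-the-one procedure a person would describe.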

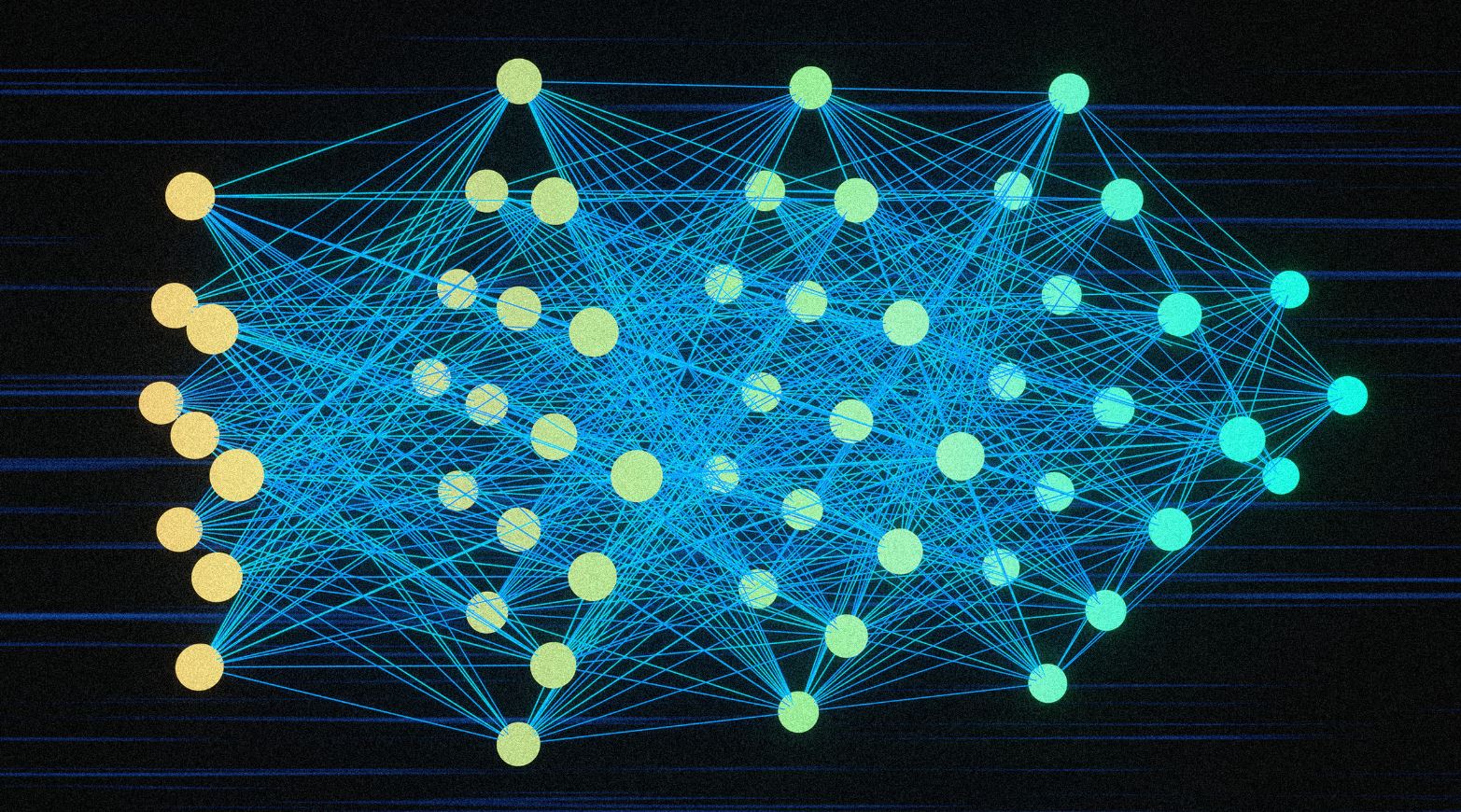

In a related study, Google looked at how AI language models compare to human brain activity during conversations. Published in Nature Human Behaviour, the study found that the internal workings of OpenAI’s Whisper model are pretty close to human neural patterns when predicting upcoming words. However, there are still key differences. While Transformer models can process many tokens in parallel, our brains handle language one word at a time, sequentially and through recurrent loops.

Google notes, “While the human brain and Transformer-based LLMs share basic computational principles in natural language processing, their underlying neural circuit architectures differ significantly.”